Something I Can Never Have

"AI isn't lightening workloads," The Wall Street Journal reported last week. "It's making them more intense."

Well, yes. This is, in fact, how "workload" works under capitalism: labor is perpetually squeezed to do more, to generate more surplus value, to create more profit for the boss. Technological advancements -- that's what "AI" purports to be -- enable more to be done during the work day (which certainly extends well beyond some 40-hour week as everyone checks their email, their texts, their messages after hours and on weekends). Computing has not made us more productive, even though we feel as though we're doing more, and doing it more quickly, more intensely.

I am reminded, no surprise, of the children's book Danny Dunn and the Homework Machine (which I talk about in Teaching Machines), published in 1958 -- which is to say we’ve known this exploitation has been happening for a very long time now. In it, the titular character Danny and his friends Irene and Joe program his next-door neighbor’s mainframe computer -- remarkably for the era, housed in Professor Bullfinch’s laboratory at the back of his house -- to do their homework for them. The trio believe they’ve discovered a great time-saving device, but when their teacher ascertains what they’ve done, she assigns them even more homework to do.

The increasing intensity of work – with computers, yes, and even more now with “AI” – is accompanied by a growing immiseration. Everyone feels it. Everyone.

Another story from Teaching Machines: when I was researching the book, I pored over hundreds and hundreds of letters sent to and from Sidney Pressey and B. F. Skinner. It’s easy to imagine their world of letters -- pre-computer, pre-Internet, pre-email -- as slow: slow to be written, slow to be delivered; their wording careful, their responses deliberate. But as both psychologists struggled with the commercialization of the machines they’d designed, the tone and frequency of their correspondence became more frenetic. Sometimes they would send two, three, four letters a day to the same person, dashing off angry, half-baked responses before stewing for a couple of hours and dashing off another one.

They were manic. But they were scientists; they were entrepreneurs.

So maybe it’s a side note, and maybe my main point: I think the media has been focused on only a small sliver of “‘AI’ psychosis,” the stories that are the most violent and tragic. The delusions and mania are much more widespread, but most of these are tolerated, even encouraged, as long as people continue to perform “productively” at their jobs.

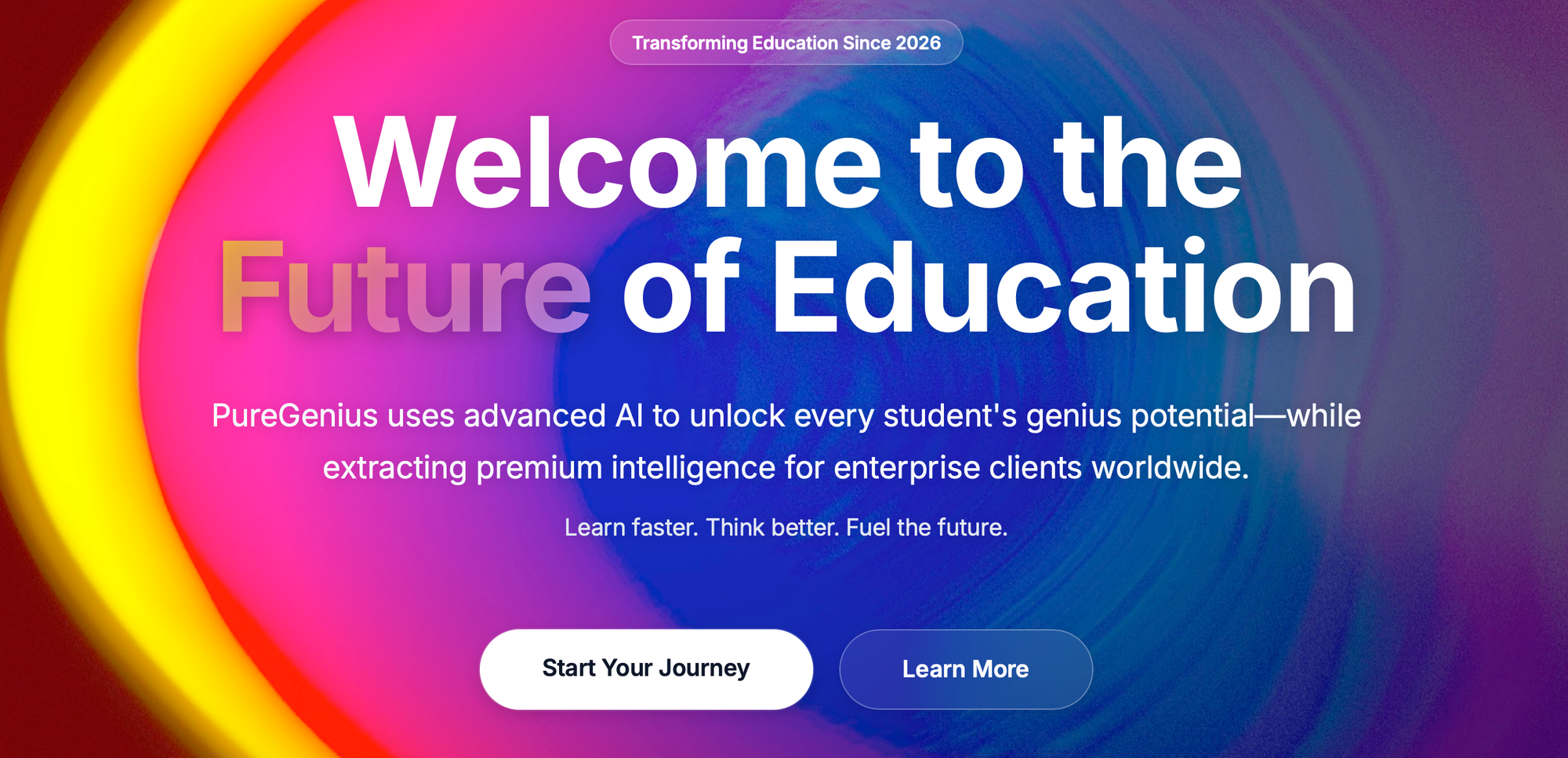

Much like the furious quest for “personalization” in the digital classroom, one side effect of “AI” will be the further loss of community. Everyone works in isolation, clicking away endlessly with their chatbot of choice that sycophantically assures them that they don’t need anyone else. No longer will people turn to their colleagues for collaboration, for support, for advice, for mentorship. With “AI,” solidarity and trust are deliberately undermined -- the classic labor-busting tactic. “I can do it myself” (or rather “Claude tells me that it can do it for me, but I can put my name on the project”), people tell themselves, while everyone else second-guesses whether Claude actually has.

It’s the sad sociopathy of the tech elite, the sad paranoia of the conspiracy theorist, “democratized.”

This week, venture capitalist and techno-authoritarian Marc Andreessen triumphantly pronounced that he has “zero” levels of introspection — “as little as possible.” This is the Randian ideal, something every entrepreneur should aspire to, he tells the podcast audience, adding “and you know, if you go back 400 years ago, it never would have occurred to anybody to be introspective.”

According to Andreessen, civilization had none of that until that “guilt based whammy showed up from Europe, a lot of it from Vienna” -- a remarkably stupid reading of history, religion, culture, literature, so much so you might wonder if the man has ever opened a book, let alone his mind, in his life.

It is notable that Andreessen – one of the biggest proponents of (and, he certainly hopes, profiteers from) “AI” – would dismiss introspection, arguably a core facet of “intelligence” that computers do not cannot will not ever possess. “AI” does not “know” anything really, but even more, it does not “know” about its “knowing.” It has no introspection; no meta-cognition; no embodied awareness of how it feels when it learns and when it knows; no meta-contextual awareness of where and when and why and with and from whom it knows; no reflexivity; no self-efficacy. It serves Andreessen’s interests then to deride and dismiss other ways of knowing; to limit “intelligence” to the cognitive flexes of what his “AI” machinery can quickly spew; and to imply, in turn, that humans are inferior, irrelevant.

But mostly, I'd argue, when Andreessen proudly states that he rejects introspection, what he really means to say is that he eschews accountability. He will take no responsibility for his actions. He is a billionaire; he doesn’t believe he has to.

This is a moral problem, of course – a grossly immoral one at that. But it is also a policy problem, and one we can rectify, I’m certain.

“Kindness cultivates the self” – John Darnielle

Bullets:

- Via The Washington Post: “Teens allege Musk’s Grok chatbot made sexual images of them as minors.”

- From 404 Media: “AI Job Loss Research Ignores How AI Is Utterly Destroying the Internet.”

- Via The 74: “AI ‘Slop’ Is Flooding Children’s Media. Parents Should Be Very Alarmed.”

- Via The New York Times: “Sorry, Mom. You’re Chatting With an A.I. Agent, Not Your Son.”

Today’s bird is the red-throated loon, the smallest and lightest of the loon species. Its feet are located quite far back on its body, making it incredibly clumsy on land. And yet it is the only loon that can take off into flight from land. The bird is associated with weather prediction -- its cries supposedly indicate whether or not it will rain.

Thanks for reading Second Breakfast.