Foolin'

Kin and I have a nice little collection of vinyl -- mostly jazz, but music from other genres too, including a few beloved albums from our teens and twenties.

He had just set the needle down on one of these when I walked in the door, home from my morning run. I recognized it from the first notes of the first track: Def Leppard's Pyromania. (“Rock! Rock! 'Til You Drop,” in case you've forgotten.)

I recognized the music; I knew all the lyrics to every single song; and when I boasted as much, Kin challenged me, “Name all the band members,” knowing, I’m sure, that I could. And I did, no Googling necessary: Joe Elliott, Steve Clark, Phil Collen (not to be confused with Phil Collins from Genesis), Rick Savage, and Rick Allen. I could cite other trivia too: the band were from Sheffield, England. It was in between the release of this album and their next, Hysteria, that the drummer, Rick Allen, lost his arm in a car accident. (I know all the lyrics to that album as well.) The band had a Vegas residency earlier this year; how or why I know this, I really cannot tell you.

I sometimes joke that my brain is full of all sorts of stuff like this -- useless stuff, one could easily dismiss it as -- which takes up the brain-space that I could have, should have used to preserve other, more important information, such as everything I learned in math class in 1983, the year that Pyromania was released.

But -- record scratch -- that's not how the brain works. Facts and memories are not files stored in a database, and there is no "storage full" warning when you hit capacity. There is no storage capacity. You don't have to delete old knowledge -- say, functions, which I’m certain that, at some point in eighth grade, I did know how to solve -- in order to make room to stick new knowledge in your head.

The brain is not a computer. The computer is not a brain.

I recognize that this has been the dominant metaphor for the last seventy years or so, but it's become far too easy to blur analogy and actuality. And "AI" is only making it worse, particularly as the industry’s hustlers try to convince you that you don’t even need to stick new stuff in your own head anymore; you can just plop it into an LLM and magically, personal knowledge will emerge.

Def Leppard played in my hometown of Casper, Wyoming in August 1983 (Autograph, with their one-hit-wonder “Turn Up the Radio,” was the opening act). This was the night before Picture Day -- I wasn't allowed to go, but I remember this anyway because so many classmates wore that sleeveless Union Jack concert tee the next day that, if you were to judge by the photos in our yearbook, you'd surmise that the flag was our school uniform.

A friend I went to junior high with recently posted on Facebook that she had no idea the band was British. I was taken aback. “What about all those Union Jack t-shirts people wore in eighth grade?!” I asked. She said she hadn't noticed. And fair enough, maybe I noticed because my mom is British (something that was deeply “foreign” in Wyoming), and the symbol was noteworthy to me.

There are other reasons why Def Leppard matters to me a little more than maybe it should. Pyromania was the first album (a cassette, to be precise) I bought with my own money, hard-earned from babysitting. I listened to it over and over and over again, admittedly a little annoyed that my favorite song was the second song on the second side, which required a great deal of fast-forwarding and rewinding to locate and replay.

But replay I did. That's how I learned the lyrics. (There were no liner notes.) That's surely why I remember the lyrics. Repetition reinforces.

But every repetition, every resurfacing of a memory – this memory, that memory – changes that memory too because the brain is not a computer. Every time you remember something, you are a different person (even if you're still the person whose teenage music tastes were unfortunately formed by a lot of Mutt Lange productions).

A computer, as Clive Thompson once swooned in an article in Wired – “Your Outboard Brain Knows All” – has “the perfect recall of silicon memory.” It's “an enormous boon to thinking,” he insisted, before going on in the next sentence to confess something quite contradictory: he'd already forgotten much of what he’d written and published. We’re supposed to take comfort, I suppose, that it’s all still online – at least for as long as the Wired archives remain available and accessible, of course.

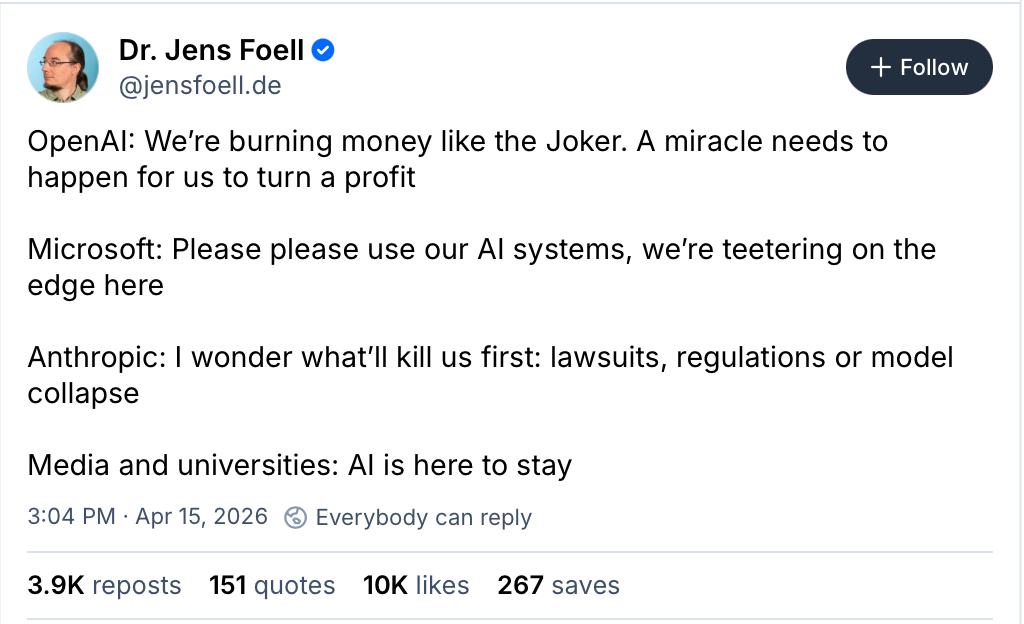

Perhaps, as more and more of the Web disappears, as sites go offline and links decay, those who’ve proudly stated they were relying on it as their “outboard brain” might be the first to speak up and caution against depending too much on the latest “outboard brain,” the LLM. This seems particularly wise as “AI” companies are all incredibly over-extended financially, instituting rate limits on just how often you can use the technology – not so much because demand is high as because they can't afford for you to do so. (Just imagine having to wait a few days before you can “remember” or “think” or “write” because you’ve already burned through all your allotted tokens. Seems... dumb?)

Computer memory is all ones and zeros, closed or open and nothing in-between. Human memory, human knowledge is nothing like that. Anything, everything we know and remember is so much more complex, as our memories are chemical and neurological, not binary code; our bodies are biological, not mechanical. Our memories and our thoughts – what and how and why we remember and know – are always changing, are always in the process of being refreshed, renewed, expanded, revised. Our ideas are contingent on our knowledge and our experiences – on our embodied experiences, what has happened and what is happening in our bodies, including and beyond our brain.

(The Pyromania I know now is not the Pyromania I knew then. And ooof, maybe this wasn’t the best hook to hang this essay on.)

Our understanding of the world -- knowledge, memories, skills -- is never inert, as are the versions of these things fixed in print or in the machine. And importantly, the more we know, the more we practice knowing – thinking, reading, writing, imagining, talking to one another – the more we strengthen our ability to know.

And the inverse is true too: the less we practice, the weaker our cognitive powers. The more superficial and scattered our mental activities – skimming, clicking – the more shallow our thinking. The more we “outsource our thinking” to “AI” (hell, to the computer or the Web), the more we might find ourselves unable to think deeply at all.

Of course, the promoters of “AI” are quite insistent that their technologies “think” more quickly, more deeply, and more consistently than do humans. As such, they argue, it’s best for us all to simply hand over our intellectual tasks to their machinery – machinery like NotebookLM, for example, to which you can upload all sorts of documents to be indexed, summarized, and then queried for “the answers.” Or machinery like Otter.ai, just one of many note-taking apps that says it can transcribe and summarize meetings or classes, as well as provide a list of “key takeaways” and “action items.”

The emphasis is always on the product – some sort of digital output that will, in some circumstances, let you put a little checkmark on your task list.

The notes. The summaries. The essays. The answers. The insights.

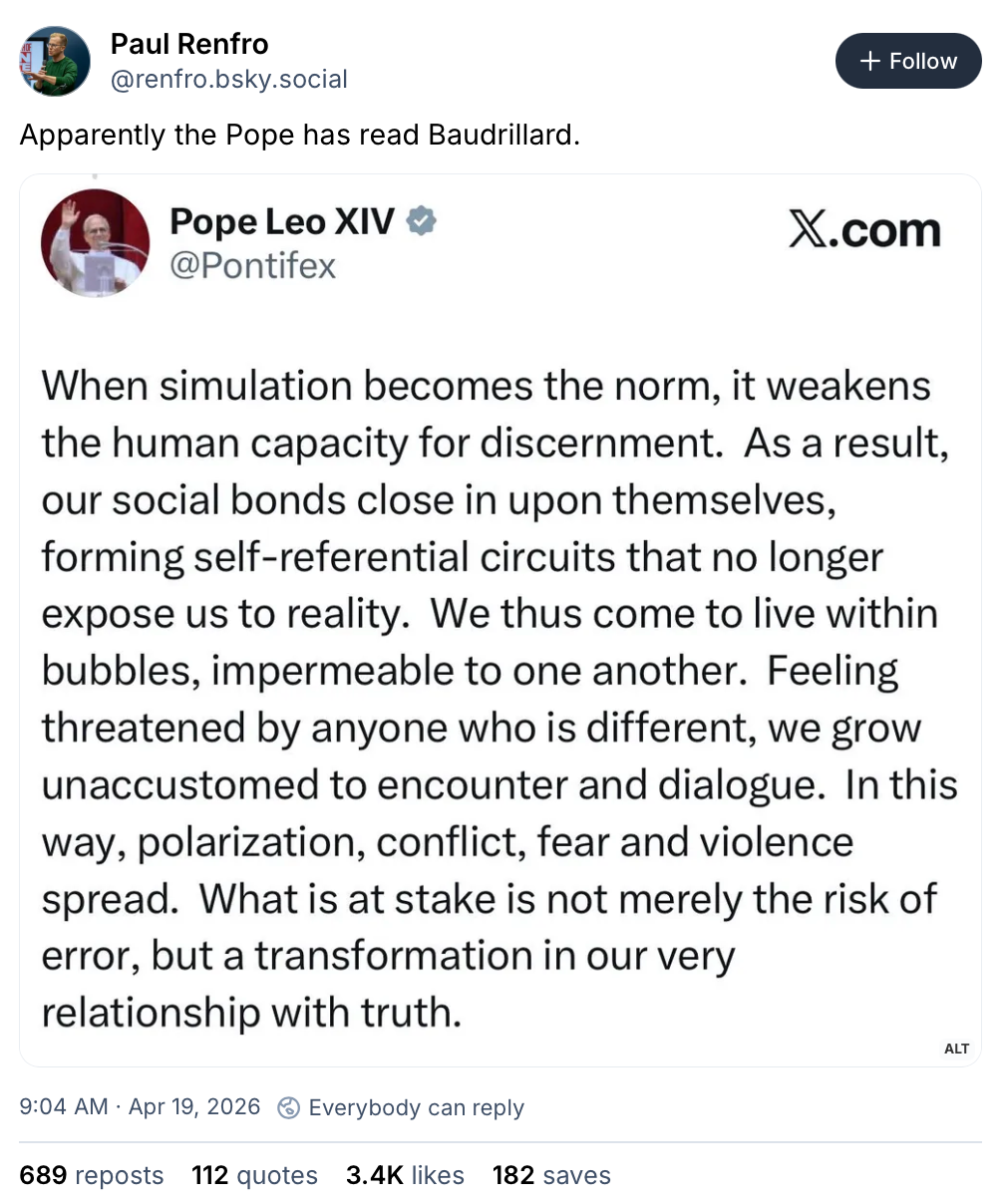

There's a product, but there is no process for you, the user. No discernment, no contemplation. No recollection or consolidation of earlier thoughts and ideas and memories. No cognitive effort through which you will think or learn or know or grow or ever remember any of this.

Long Live Rock-n-Roll:

- “Students are speeding through their online degrees in weeks, alarming educators” via The Washington Post

- “Reinventing the Wheel, Again” by Glenda Morgan

- “How Professional Wrestling Prepared Linda McMahon for Trump’s Cabinet” by Zach Helfand

- “Whose while are you worth?” asks Julia Freeland Fisher

- John Warner argues that “infinite patience is not good for education”

- “Los Angeles becomes the first major school district to require screen time limits” via NBC

- “New Gas-Powered Data Centers Could Emit More Greenhouse Gases Than Entire Nations” via Wired

- Imagine my surprise to read this piece in The New Yorker by Jessica Winter – “What Will It Take to Get A.I. Out of Schools?” – and find my work is cited.

Today’s bird is the egret, from the Old French aigrette. Not to be confused with regret, from the Old French regreter.

Thanks for reading Second Breakfast. Please consider becoming a paid subscriber. Incredibly – 1000+ words on Def Leppard, I know, I know – this is how I make a living.